IGNOR: Neural Object Rendering with Image Guidance 🎥

Learn a new view synthesis method for realistic 3D object rendering using image-guided neural networks, presented at ICLR 2020.

Matthias Niessner

4.8K views • Nov 28, 2018

About this video

ICLR 2020 Paper Video

Project Page: https://niessnerlab.org/projects/thies2020ignor.html

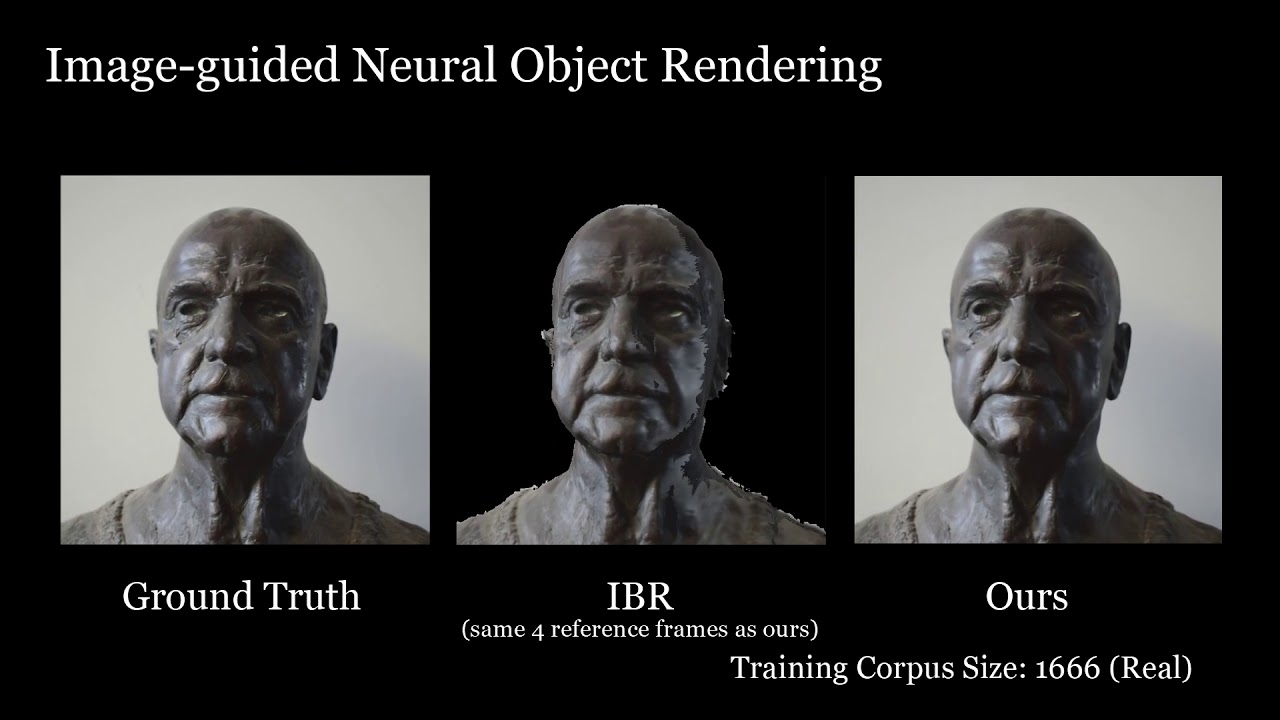

We propose a new learning-based novel view synthesis approach for scanned objects that is trained based on a set of multi-view images. Instead of using texture mapping or hand-designed image-based rendering, we directly train a deep neural network to synthesize a view-dependent image of an object. First, we employ a coverage-based nearest neighbor look-up to retrieve a set of reference frames that are explicitly warped to a given target view using cross-projection. Our network then learns to best composite the warped images. This enables us to generate photo-realistic results, while not having to allocate capacity on ``remembering'' object appearance. Instead, the multi-view images can be reused. While this works well for diffuse objects, cross-projection does not generalize to view-dependent effects. Therefore, we propose a decomposition network that extracts view-dependent effects and that is trained in a self-supervised manner. After decomposition, the diffuse shading is cross-projected, while the view-dependent layer of the target view is regressed. We show the effectiveness of our approach both qualitatively and quantitatively on real as well as synthetic data.

Project Page: https://niessnerlab.org/projects/thies2020ignor.html

We propose a new learning-based novel view synthesis approach for scanned objects that is trained based on a set of multi-view images. Instead of using texture mapping or hand-designed image-based rendering, we directly train a deep neural network to synthesize a view-dependent image of an object. First, we employ a coverage-based nearest neighbor look-up to retrieve a set of reference frames that are explicitly warped to a given target view using cross-projection. Our network then learns to best composite the warped images. This enables us to generate photo-realistic results, while not having to allocate capacity on ``remembering'' object appearance. Instead, the multi-view images can be reused. While this works well for diffuse objects, cross-projection does not generalize to view-dependent effects. Therefore, we propose a decomposition network that extracts view-dependent effects and that is trained in a self-supervised manner. After decomposition, the diffuse shading is cross-projected, while the view-dependent layer of the target view is regressed. We show the effectiveness of our approach both qualitatively and quantitatively on real as well as synthetic data.

Tags and Topics

Browse our collection to discover more content in these categories.

Video Information

Views

4.8K

Likes

95

Duration

4:31

Published

Nov 28, 2018

User Reviews

4.6

(4)