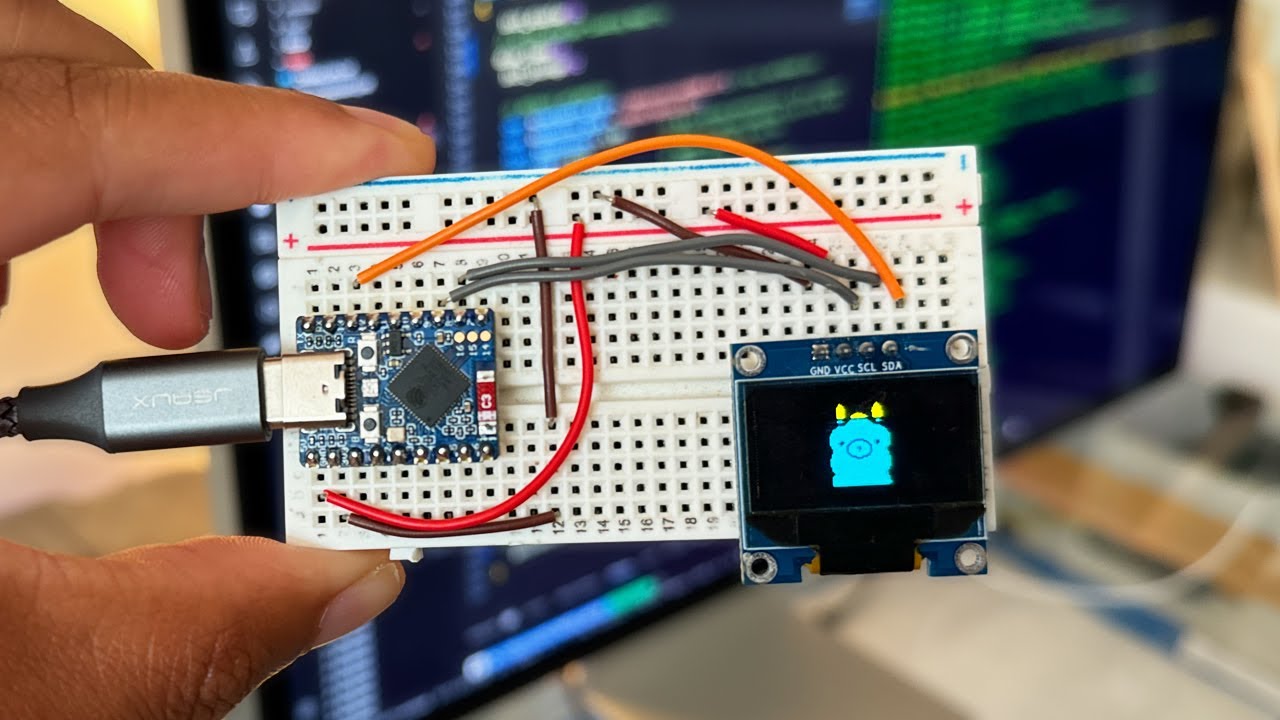

I Ran a Local LLM on the ESP32 – Here's What Happened

Dave Bennett

30.8K views • Sep 3, 2024

About this video

In this video, I take on the challenge of running a Large Language Model (LLM) on the ESP32 microcontroller.

Using a model based on the TinyStories dataset, with just 260K parameters, I managed to get the LLM running locally on the ESP32-S3.

Github link:

https://github.com/DaveBben/esp32-llm

🔗 Other Useful Links:

ESP32 Board I used (affiliate link): https://amzn.to/3z1hvL1

Tinyllamas repo: https://huggingface.co/karpathy/tinyllamas

llama2.c repository by Andrej Karpathy: https://github.com/karpathy/llama2.c/tree/master

Video Information

Likes: 1.0K • Duration: 2:23
