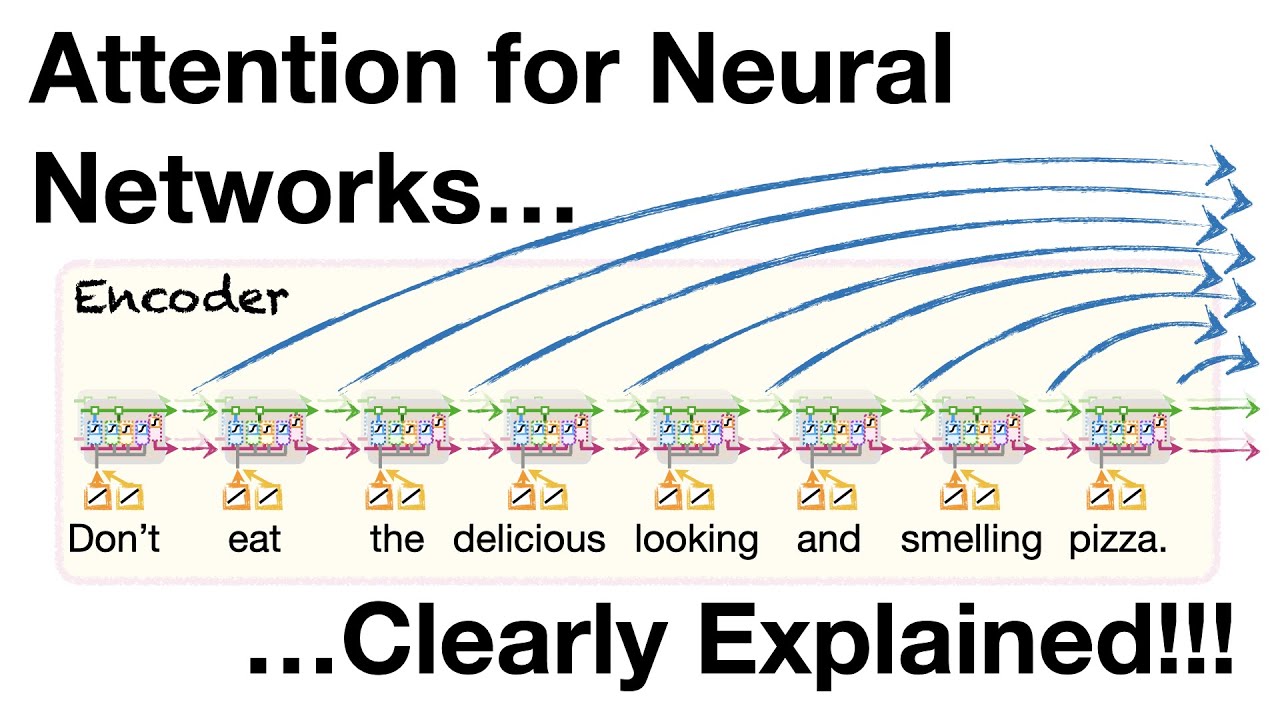

Master Attention in Neural Networks: Simple Explanation for Transformers & ChatGPT 🤖

Unlock the secrets of attention mechanisms in neural networks! Learn how attention powers Transformers and ChatGPT with a clear, easy-to-understand guide.

StatQuest with Josh Starmer

392.6K views • Jun 5, 2023

About this video

Attention is one of the most important concepts behind Transformers and Large Language Models, like ChatGPT. However, it's not that complicated. In this StatQuest, we add Attention to a basic Sequence-to-Sequence (Seq2Seq or Encoder-Decoder) model and walk through how it works and is calculated, one step at a time. BAM!!!

NOTE: This StatQuest is based on two manuscripts. 1) The manuscript that originally introduced Attention to Encoder-Decoder Models: Neural Machine Translation by Jointly Learning to Align and Translate: https://arxiv.org/abs/1409.0473 and 2) The manuscript that first used the Dot-Product similarity for Attention in a similar context: Effective Approaches to Attention-based Neural Machine Translation https://arxiv.org/abs/1508.04025
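To make the Dot-Product similarity mentioned above concrete, here is a minimal sketch of one attention step in plain Python. It assumes, as in the second manuscript, that the similarity scores are passed through a softmax to get weights. The function names (`dot`, `softmax`, `attention`) and the toy two-dimensional vectors are made up for illustration and are not taken from the video.

```python
import math

def dot(u, v):
    # Dot-product similarity between two equal-length vectors.
    return sum(a * b for a, b in zip(u, v))

def softmax(scores):
    # Turn raw similarity scores into weights that sum to 1.
    # Subtracting the max keeps exp() numerically stable.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(decoder_state, encoder_states):
    # 1) Score each encoder state against the current decoder state.
    scores = [dot(decoder_state, h) for h in encoder_states]
    # 2) Convert the scores into attention weights.
    weights = softmax(scores)
    # 3) Attention values: weighted sum of the encoder states.
    dim = len(encoder_states[0])
    context = [sum(w * h[i] for w, h in zip(weights, encoder_states))
               for i in range(dim)]
    return weights, context

# Hypothetical toy values: two encoded input words, one decoder state.
encoder_states = [[1.0, 0.0], [0.0, 1.0]]
decoder_state = [2.0, 0.0]
weights, context = attention(decoder_state, encoder_states)
print(weights, context)
```

In this toy case the decoder state is more similar to the first encoder state, so the first input word gets the larger weight. In a full Encoder-Decoder model, the attention values would then be combined with the decoder output to help predict the next word.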

NOTE: This StatQuest assumes that you are already familiar with basic Encoder-Decoder neural networks. If not, check out the 'Quest: https://youtu.be/L8HKweZIOmg

For a complete index of all the StatQuest videos, check out:

https://statquest.org/video-index/

If you'd like to support StatQuest, please consider...

Patreon: https://www.patreon.com/statquest

...or...

YouTube Membership: https://www.youtube.com/channel/UCtYLUTtgS3k1Fg4y5tAhLbw/join

...buying one of my books, a study guide, a t-shirt or hoodie, or a song from the StatQuest store...

https://statquest.org/statquest-store/

...or just donating to StatQuest!

https://www.paypal.me/statquest

Lastly, if you want to keep up with me as I research and create new StatQuests, follow me on twitter:

https://twitter.com/joshuastarmer

0:00 Awesome song and introduction

3:14 The Main Idea of Attention

5:34 A worked out example of Attention

10:18 The Dot Product Similarity

11:52 Using similarity scores to calculate Attention values

13:27 Using Attention values to predict an output word

14:22 Summary of Attention

#StatQuest #neuralnetwork #attention

Video Information

Views: 392.6K

Likes: 7.5K

Duration: 15:51

Published: Jun 5, 2023

User Reviews

4.8 (78)
